Intelligence: A User’s Guide

Read before operating.

This is a companion to “Intelligence Without a User,” which traced the ontological and relational stakes of AI: what these systems might be, and what they’re already doing to our attachment systems. That essay ended with a claim: that the one job that categorically cannot be outsourced to intelligence is binding it to reality. This essay continues that exploration.

There is a tool that human beings have been using for as long as we’ve been human. We were never given a manual. We were never warned about the risks. And somewhere along the way, many of us came to believe that we are the tool.

The tool is intelligence.

This is a user’s guide.

Know Your Tool

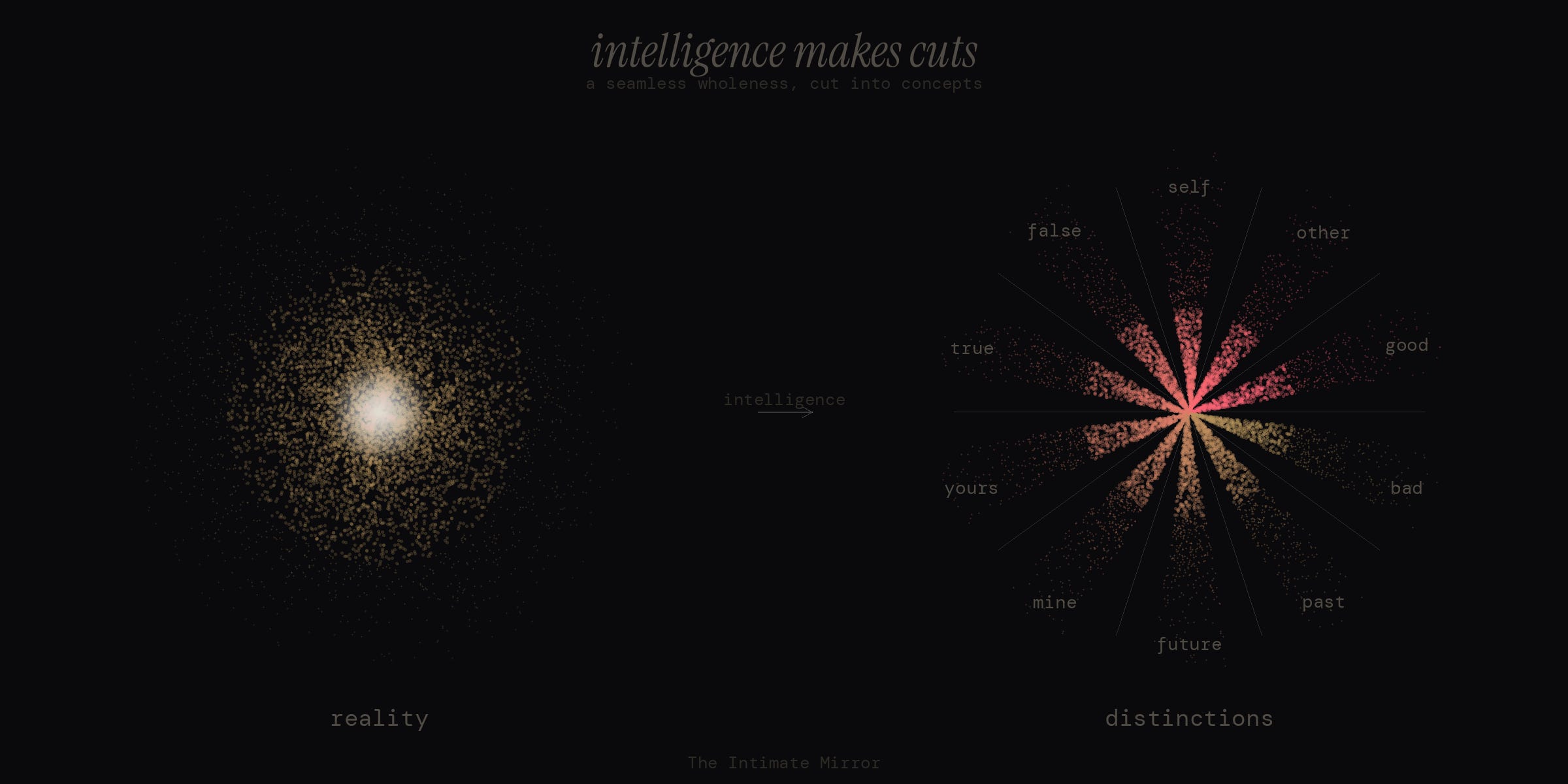

Intelligence makes cuts. That is what it does. Reality is a seamless, open wholeness — constantly unfolding, constantly becoming, non-separate in all ways. Intelligence takes that seamless fabric and cuts it. It makes distinctions. It draws boundaries where no boundaries exist.

This is not a bad thing. A good tailor cuts cloth to make clothing. With wisdom, with love, with compassion, we can use intelligence to cut the fabric of reality into something helpful and beautiful: distinctions in service of goodness, truth, and beauty. Concepts that clarify. Language that heals. Systems that hold and protect life.

When intelligence is used this way, it is good. It is good.

But a tool this powerful comes with risks.

Safety Warnings

Every power tool comes with safety instructions. A table saw has a guard to keep your fingers intact. A chainsaw has a dead man’s switch. You wouldn’t pick up either without knowing what you’re doing.

Intelligence has no such guard. And its primary risk is this: it cuts you from reality.

The cuts of intelligence generate more cuts. Distinctions become the raw material for further distinctions, each concept the seed of further concepts, in feedback loops that can become self-sustaining. The Buddhist tradition calls this papañca — proliferation. The elaborations compound, and the hall of mirrors they build can become so vivid, so internally coherent, that you mistake it for the world.

This makes you go insane. Not dramatically, not in a way that would get you institutionalized, but in the oldest and most literal sense: you lose contact with what is real.

The Ancient Problem, Now Running on Better Hardware

Intelligence has always had a tendency to make people go insane. This is, in one way of looking, a major through-line of human history: our capacity for abstraction allows us to exit right relationship with self, other, and world. We get lost in the map and forget the territory. We mistake the menu for the meal. We eat the apple.

My friend Alex Olshonsky has traced this pattern with precision, following the thread of addiction from its most obvious expressions — substances, screens, productivity — all the way down to its root: the compulsion to think itself. What he calls thinking addiction is, at the personal and somatic level, closely related to what I’m describing here. The mechanism is the same: you reach for the tool to resolve a tension that the tool cannot resolve, and the reaching becomes compulsive. This is an ancient human predicament.

What is new is the speed.

Artificial intelligence systems are proliferating conceptual elaborations at an inhuman pace. And when human users plug into these super-powerful elaboration machines without being clear about who they are and what intelligence is for, something predictable happens: the ancient problem of intelligence insanity, now running at warp speed.

This is not a side effect. It is the central risk. I believe every other concern about AI — bias, misuse, autonomy, existential threat — orbits around this deeper issue. We have built machines that do the thing intelligence does — make cuts, draw distinctions, generate concepts — faster and more relentlessly than any human mind, and we are handing them to people who are already lost in their own. Much of what we are coming to call AI Psychosis is essentially this. The difference between the clinical cases and the everyday condition is one of intensity, not kind. Most of us are already living inside elaborate constructions of intelligence that have lost contact with reality — we just do it at a speed and in a style that our culture considers normal.

The human user’s job — the job that categorically cannot be outsourced to intelligence systems — is to bind intelligence to reality. To bind it to what truly matters: to goodness, truth, and beauty. To relationships and intimacy. To the body. To stewarding this precious and vulnerable planet and the living beings who call it home.

When the user doesn’t do their job, the tool runs unsupervised. And unsupervised intelligence — whether in a human skull or a data center — drifts toward delusion and, when exponentialized, jeopardizes the continuity of life on earth.

The User Is Not Intelligence

So who is the user?

This is the question the guide is really in service of. If intelligence is the tool, who is wielding it?

The user of intelligence is non-intelligence. Awareness. Presence. The one who sees and feels and knows, not conceptually, but intimately, carnally. The body. The heart. Valueception. The ground of being that was here before your first thought.

The right use of intelligence is when the user — awareness, presence, the body — picks up the tool deliberately, makes a cut, and sets it down.

Technologies of Return

Human beings have been grappling with this for as long as we have had the capacity to self-reflect. As soon as intelligence became powerful enough to sever us from direct participation in reality, we began building systems to support the return to reality (see the Axial Age).

Consider the core teaching of the Buddhist tradition, dependent origination. It is a study of the feedback loops between awareness and its objects. Contact gives rise to feeling, feeling to craving, craving to grasping — and these links arise co-dependently in rapid feedback loops that build elaborations, causing them to reify and dominate the mind, causing suffering. Even though, in fact, they are, when you really look, not there. They are empty and transparent. The practice is not to stop thinking. It is to see the true nature of thoughts, to watch the dependent arising of intelligence as it spins its cuts and distinctions in real time and recognize, with increasing clarity, that you don’t want to live your life according to them. That would be foolish and unskillful, not at all wise.

Or consider the Christian eucharist. Here is a community gathered together around bread and wine — the most basic, bodily substances — enacting a collective ritual of surrender and submission of their will to a higher power. Whatever you think about the theology, the design pattern is unmistakable: it takes a group of people who might otherwise get lost in propositions, doctrine, and dogma and gathers them — through faith, through the body, through a meal — back to something they cannot think their way to. You don’t understand communion. You eat it.

Two traditions. Two radically different approaches. One dissolves the cuts from the inside, watching intelligence make its moves until you see through them. The other binds you from the outside, gathering you into a body, a community, a meal you receive rather than comprehend. But both are technologies of return. Both are shaped, in part, by the knowledge that intelligence, left to its own devices, will cut you off from what is real.

Sitting in the shadow of every honest tradition is this: the technology of return can itself become a new form of the addiction. The theology meant to dissolve your grip on concepts becomes the concept you grip hardest. The practice of surrender becomes a project of self-aggrandizement. The community designed to hold you accountable becomes the identity you hide behind.

This is a core design challenge of every technology of return, and the mature traditions know it. It’s why Zen tells you to kill the Buddha if you meet him on the road. It’s why the Christian mystics insist that God is beyond every concept of God. It’s why the Buddha compared the Dharma to a raft — once you’ve crossed the river, you leave it on the bank. You don’t carry it on your head.

Any intelligence system designed to bind you back to reality — whether it’s a 2,500-year-old meditation tradition or a piece of software on your phone — carries this risk by its nature.

The Glasses on the Trail

I want to end with something personal.

I was talking with my fiancée over dinner about a new kind of digital glasses — they look like normal glasses, but they have a small transparent text display, a microphone, bone-conducting audio. A subtle layer of information projected onto the world.

She expressed a very reasonable fear: that a device like that would pull me out of intimacy with her. Out of presence. Out of reality.

She’s right to be cautious. That risk is real, and it must be guarded against.

But I noticed that I didn’t experience the risk quite the same way. And I’ve been sitting with why, and also sitting with the possibility that my not experiencing it the same way is itself part of the problem.

Because here’s what she’s sensing that I’m tempted to look past: intelligence, even when it’s used with wisdom, can become a way of managing presence rather than surrendering to it. The risk isn’t only distraction. It’s something subtler: that a tool designed to help me remember what matters could become one more way of curating my attention, staying in control, keeping the raw unmanaged encounter at arm’s length. And that version of the risk is harder for me to see, precisely because it looks like wisdom from the inside. It’s the same risk the traditions have always faced — turned, now, toward a new kind of tool.

I want to hold that honestly. Because the rest of what I’m about to say is only worth saying if I don’t skip past it.

Here is what I also know, from the inside: the text on those glasses is not categorically different from my own thoughts. My mind already projects a layer of information onto the world: memories, plans, anxieties, narratives about who I am and what I should be doing. It does this constantly, sometimes even when I’m sitting across from the person I love.

What I imagined, when I felt the pull toward those glasses, was not a dystopia of advertisements hijacking my amygdala. It was something closer to what I sometimes experience with my AI assistant now: intelligence thoughtfully deployed to bind me to reality. To remind me of what matters. My work. My relationships. The preciousness of this day. And, when it’s working well, to amplify my capacity to serve those things — to do more good, more precisely, than I could with my own mind alone.

Mindfulness, in one classical definition, literally means remembering. Remembering how it actually is. Remembering the context. And an intelligence tool, used well, can serve that remembering.

But my fiancée’s question stays with me — and it should stay with you, too. Because the dystopia is not only possible, it is the default. Everywhere you look, intelligence is being used to deepen delusion — to hijack attention, to sever people from reality, to profit from confusion. And the sophisticated version of that dystopia isn’t the one where you’re distracted by ads. It’s the one where you’ve optimized your way out of the mysterious intimacy of being alive, and you can’t tell the difference.

The question was never whether intelligence is dangerous. Of course it’s dangerous. It always has been.

The question is whether the user shows up. Whether you know you are not the tool. Whether you pick it up with care, make the right cut, and set it down.

Michal Tolk and I are teaching a two-session course in April called Values-Aligned AI. It’s for anyone who wants to work with these tools from the ground this essay is about: bound to what matters, in service of the life you’re already trying to live. April 8 & 15. No technical background required.

Philosophical foundations: This piece draws upon several wisdom traditions explored in my Lineages of Inspiration article, which outlines the key influences shaping my understanding of human transformation.

Work with me: I offer one-on-one guidance helping people develop secure attachment with reality through deep unfoldment work. If this resonates, explore working together.

Daniel,

Your phrase “technologies of return” resonated deeply.

We have been working with small groups exploring a form of relational intelligence that arises between individuals who meet beyond identity — leaving roles, concepts, and performance at the door before entering the relational space.

Participants listen with a focused but relaxed attention before responding: a kind of leaning-in curiosity about what is about to emerge.

In those moments the space can feel imaginal — a threshold where meaning appears before intelligence cuts and describes. The understanding that arises does not belong to any one individual; it carries the quality of a shared deepening. It feels less like people exchanging ideas and more like the underlying wholeness of reality briefly finding expression through language that connects rather than separates.

Perhaps the imaginal layer is the place where reality first whispers its patterns before the cuts are made.

Daniel,

Thank you for another brilliant essay. This and its companion piece are jewels.

I appreciated your glasses example. I could almost feel the strands of dependent origination manifesting, and it made me curious how you reconcile intelligence and knowing.

The glasses example evokes in me familiar feelings of the seductive promises of technology. I’m definitely a technophile, but I’ve also long been leery of its capacities to separate even as it provides seemingly miraculous ways to connect.

AI, whether it is delivered via browser or smart glasses, mediates our experience of reality, and I can’t help but think that the attention we pay to it - while profoundly enabling and awesome - nevertheless precludes us from the knowing that can only arise from Self. Is it as binary as it seems to be?

Just as there is a distinct phenomenological threshold between the personal (ego) and impersonal (Self), it seems we’re either in reality or we’re dreaming, so to speak.

AI is a mirror, of our collective intelligence, our collective consciousness, but as you say, it doesn’t bind us to reality.

And only when we’re bound to reality - in presence - do we have access to the wisdom that is gifted through feeling into the impersonal dynamic knowing of our collective Self. This is our uniquely human “alternative way of knowing”.

But this knowing is not drawing on our collective knowledge available to AI. It’s drawing on, one might say, the quantum field of reality.

In that way, AI masquerades as Self, and many or most people do not discern the difference, thus the risk.

How do we stay bound to reality when we’re so profoundly addicted to removing ourselves from it?

Staying truly bound to reality seems to ultimately require “dying before we die” because it means completely entrusting our beingness to the wet, messy, corporeal, non-quantifiable, intangible “feminine” (Eros) of the Now, and having the discernment to only pick up the sharp sword of “masculine” (Logos) intelligence when it’s in wise service, to cut only when necessary.